How much do you trust your data?

Karyn Thomas, Senior Manager, Technology Advisory at BDO, discusses how data quality is directly linked to trust and ultimately business success.

If you were to quantify your level of confidence in your data quality, what would it be? 80 per cent? 40 per cent? Even less? Or do you ‘blindly trust’ that it’s ok?

Were a client, customer or the business owner to ask you for a number, could you give them one? What would you tell them? Do you expect them to trust a response that hasn’t been quantified, and therefore can’t be benchmarked or monitored?

“Blind faith” and “gut feel” shouldn’t be what we rely on when describing the quality of our data. It’s too important. Our KPIs and dashboards are built from data. Our sales, inventory, customer relationship and financial processes rely on this data. Our business owners and management base significant decisions on this data, like whether to sell a new product or service, or whether to change suppliers.

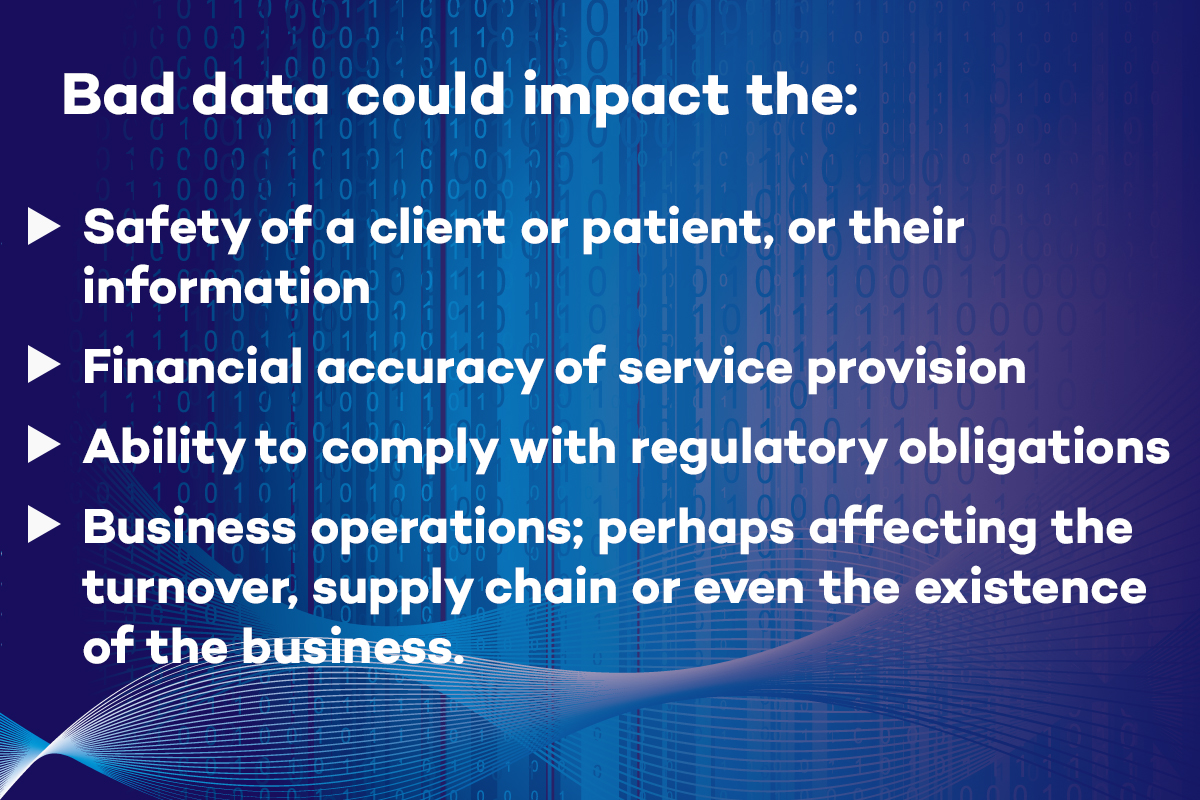

Big things can go wrong if this data is wrong. For example, imagine if the data for a legal client, a dental patient or a construction client were inaccurate, incomplete, missing, corrupt or unrecognisable to the operations and processes that consume it.

Alternatively, rather than a cataclysmic event resulting from poor data quality, a gradual erosion of trust in the data can mean businesses don’t use this valuable resource in any meaningful way.

What is trust?

Trust is a feeling of confidence and security. It’s to hope and expect that something is true. Essentially, it’s an emotional state.

Sometimes we can trust without any understanding of probability or any precise predictions. It just involves a positive feeling about something. To trust, we need to feel good about it.

Ideally, trust should also involve a logical element, based on data or evidence, where the validity and accuracy have been assessed and rated. In other words, proof.

Gathering your proof

This step is essential, so that when you are inevitably asked “What evidence do you have that our data quality is high?”, “How high is high enough?” and “How does that compare to industry benchmarks?”, you will have the answer, and the proof to back it up.

When the risk impact is high, not having this proof available on demand is no longer acceptable. Technology makes it straightforward to check, and business owners and clients simply expect it.

We all know how long it takes to build trust, and how quickly it can be broken. Evidence is the foundation to build on.

So, where do we start? The relative value of our data and the criticality of the systems that utilise it should be assessed, so we can prioritise our efforts on the data that needs it first. With our precious resources, we won’t be able to (nor should we) focus the same amount of attention on all of our data; some of it won’t be worth the effort.

We also need to assess the maturity of our existing data management processes against the appropriate maturity model, along with the quality of our data against our metrics, so that we know what our current state is.

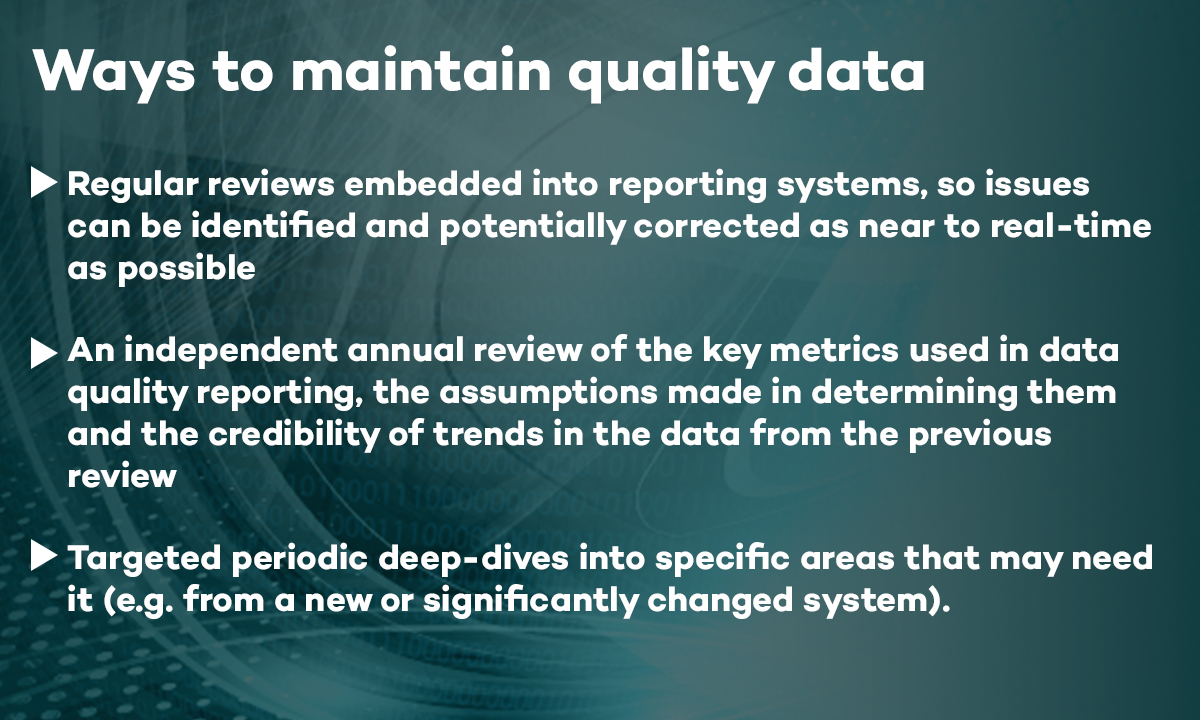

Once this has been done, we can then determine how high to set the quality benchmark and begin the process of improvement. Data Quality can be improved as a once-off, but the real benefits come when regular processes are put in place, particularly for critical data.
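As a rough illustration of what a regular check against a benchmark might look like, the sketch below compares measured quality scores with target levels and reports any shortfall. The metric names, target values and the `shortfalls` helper are hypothetical, chosen only for the example; each business would set its own.

```python
# Hypothetical targets and measurements, expressed as the fraction of
# records passing each check. None of these figures come from a real system.
targets = {"completeness": 0.98, "validity": 0.95, "timeliness": 0.90}
measured = {"completeness": 0.99, "validity": 0.91, "timeliness": 0.90}


def shortfalls(measured, targets):
    """Return the metrics that fall below target, with the gap for each."""
    return {
        name: round(target - measured[name], 4)
        for name, target in targets.items()
        if measured[name] < target
    }


gaps = shortfalls(measured, targets)
print(gaps)
```

Run on a schedule, a check like this turns “is our data good enough?” into a number that can be tracked over time.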

Maintaining high data quality is especially important to SMEs for two reasons. First, it underpins critical business systems (for example: business intelligence, customer relationship management, inventory management and supply chain management). Second, SMEs can be more vulnerable than large organisations because of tight cashflows or low reserves.

Emerging businesses may have experienced a rapid period of growth or may not have had the opportunity or economies of scale to invest in robust and expensive systems. Relying more on the quality of people to compensate for immature data management processes leaves the business with a higher risk of data errors that could result in non-compliance, financial loss or reputational damage.

Poor data quality and poor data management practices can impede growth, which can be vital to ensuring the long term survival of SMEs.

Because SMEs drive innovation and competition, good data quality and efficient data management processes can become a key differentiator and provide competitive advantage, enabling business to be conducted more cheaply and quickly. Conversely, poor-quality data can undermine efficiency initiatives such as automation.

Maintaining data quality is one of the most important responsibilities for IT or Corporate Services and is an essential part of good governance and data management practices.

There are a number of frameworks and approaches to maintaining data quality, but checks on accuracy, completeness, validity, consistency, timeliness and availability of the data will usually be included.
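To make a few of these checks concrete, here is a minimal sketch that scores a small set of records on completeness, validity and timeliness. The record layout, the field names, the simple “contains @” email rule and the 365-day freshness window are all assumptions made for illustration, not part of any particular framework.

```python
from datetime import date

# Hypothetical customer records; field names are illustrative only.
records = [
    {"id": 1, "email": "ann@example.com", "updated": date(2024, 5, 1)},
    {"id": 2, "email": "", "updated": date(2021, 1, 15)},
    {"id": 3, "email": "bob-at-example.com", "updated": date(2024, 4, 20)},
    {"id": 4, "email": "cy@example.com", "updated": date(2024, 3, 2)},
]


def quality_scores(rows, as_of, max_age_days=365):
    """Return the share of rows (0.0 to 1.0) passing each simple check."""
    if not rows:
        raise ValueError("no records to assess")
    total = len(rows)
    # Completeness: the email field is filled in at all.
    complete = sum(1 for r in rows if r["email"])
    # Validity: a deliberately crude format rule (assumed for this sketch).
    valid = sum(1 for r in rows if "@" in r["email"])
    # Timeliness: the record was updated within the allowed window.
    timely = sum(1 for r in rows if (as_of - r["updated"]).days <= max_age_days)
    return {
        "completeness": complete / total,
        "validity": valid / total,
        "timeliness": timely / total,
    }


scores = quality_scores(records, as_of=date(2024, 6, 1))
print(scores)
```

Scores like these give a quantified answer to “how much do you trust your data?”, which can then be benchmarked and monitored.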

Following the amount of attention given to data integrity in the media recently, business owners will be asking themselves if they feel comfortable with existing systems and quality checks. Is the data being used for decision making accurate, up to date, secure and relevant? Essentially, can it be trusted? This conscious shift in culture will surely have an impact on the perceived importance of Data Quality initiatives in businesses of all sizes.

To further discuss data management, data governance, data quality or business continuity planning, please get in touch with Karyn Thomas and the BDO Team.