SA tech can predict when people will likely die

Adelaide researchers have used artificial intelligence to predict when relatively healthy people will die – with alarming accuracy.

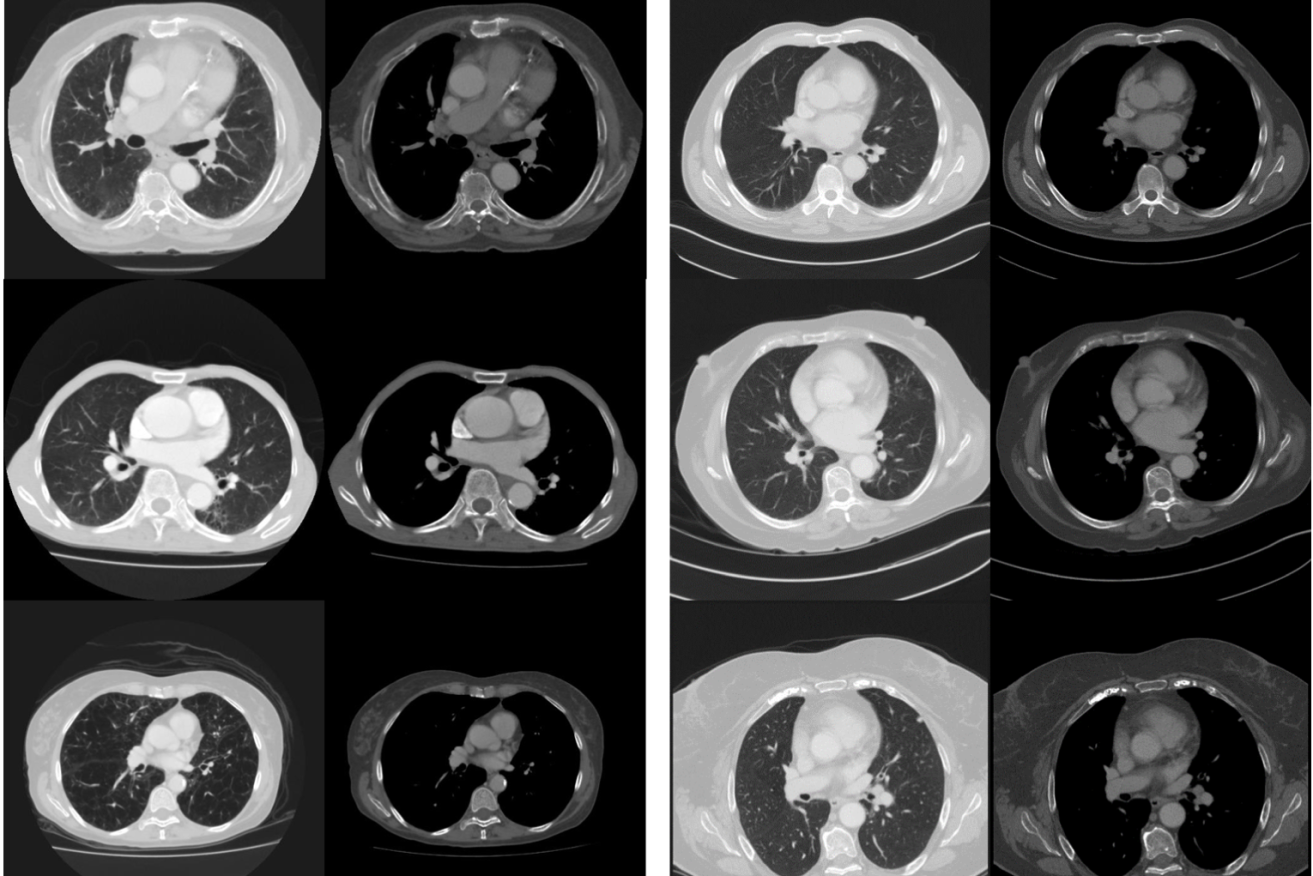

Research images of the coronary artery: the left side shows mortality cases with prominent visual changes of emphysema, cardiomegaly, vascular disease and osteopaenia. The survival cases (right side) appear visually less diseased and frail. Supplied image

Researchers at the University of Adelaide have used artificial intelligence to predict patients’ lifespans using only chest scans.

The research has implications for better, more precise treatment of individual patients with similar diagnoses – as well as potentially massive job losses in the medical profession.

The study, led by radiologist and PhD student Dr Luke Oakden-Rayner, used artificial intelligence to analyse chest CT scans from 48 patients.

“Deep learning” technology – the same category of artificial intelligence which operates driverless cars – was able to predict which patients would die within five years, with 69 per cent accuracy.

Oakden-Rayner told InDaily the research was a step towards “precision medicine”, where patients with the same or similar diagnosis can be separated into smaller subgroups and treatment can be more precisely tailored to individuals.

“By identifying patient subgroups, we can … better inform treatment decisions,” he said.

“Just trying to predict how long someone will live when they are reasonably healthy isn’t something we are [trained as doctors] to do.”

Oakden-Rayner said the technology was able to analyse images in a way that doctors cannot.

“Instead of focusing on diagnosing diseases, the automated systems can predict medical outcomes in a way that doctors are not trained to do, by incorporating large volumes of data and detecting subtle patterns.

“Our research opens new avenues for the application of artificial intelligence technology in medical image analysis, and could offer new hope for the early detection of serious illness.”

The study, published in the Nature journal Scientific Reports, is the first of its kind using medical images and artificial intelligence.

Asked whether he may be researching himself out of a job, Oakden-Rayner said it was likely many tasks now performed by doctors would in the future be done using artificial intelligence instead.

He said “deep learning” systems such as the one used in his study were particularly adept at generating useful conclusions from “things like looking, hearing and maybe even touching”.

“These systems can look in people’s eyes … look at moles and skin lesions.

“In radiology, the vast majority of what we do is looking at images … there will be some replacement of a lot of tasks that doctors do.”

He said it was likely that advances in “deep learning” technology would make key areas of healthcare much cheaper, thereby increasing demand and, possibly, keeping doctors in a job.

“It may be that the increase in demand because [healthcare] suddenly becomes cheaper [means] doctors won’t lose their jobs.”

He added that there were large areas of medicine that artificial intelligence was unlikely to disrupt – yet.

For example, he said, there would need to be “really significant advances in robotics” before anyone could be treated by an entirely automated, robotic surgeon.

The next stage of the team’s research will involve analysing tens of thousands of patient medical images.